Ethical AI-Powered Regression Test Selection

Informal Summary

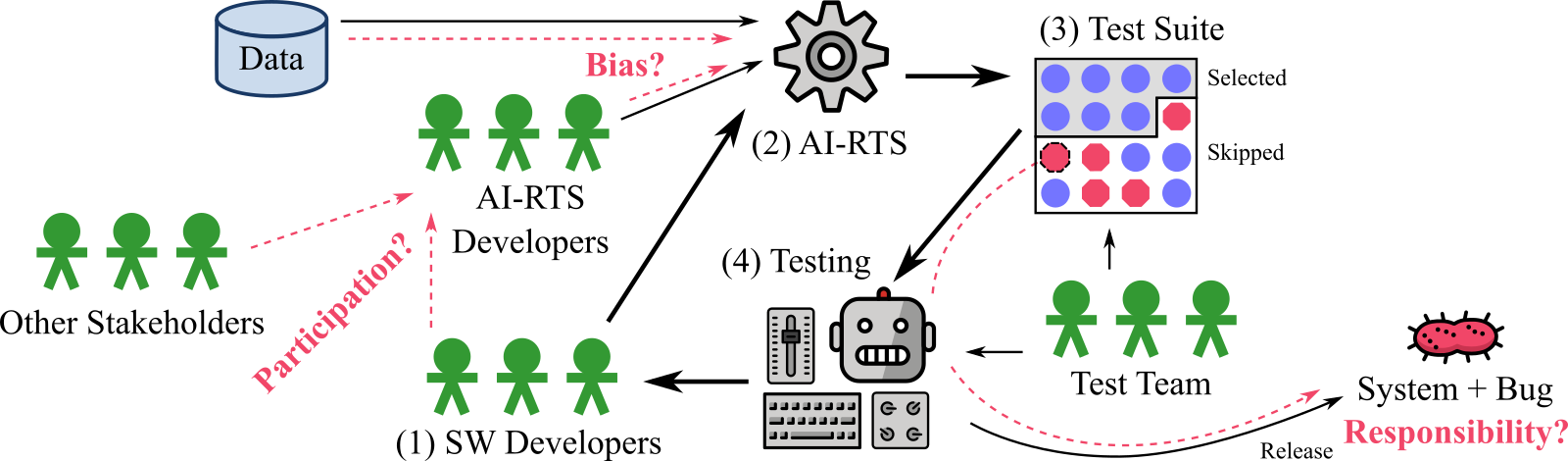

In this short (two-page) paper, we investigate ethical issues with underperforming regression test selection: Who is responsible if the test selection is poor, and would explicability support taking responsibility? How can we know that an AI-powered or automated regression test selection is not biased, and would supervision help? Have all the necessary stakeholders participated in the development, and would diversity improve the tool?

Preprint: available from arXiv [1] (or here [2])

Slides from the presentation: [3]

Video presentation (YouTube): [4]

Abstract

Test automation is common in software development; often one tests repeatedly to identify regressions. If the number of test cases is large, one may select a subset and only use the most important test cases. Regression test selection (RTS) could be automated and enhanced with Artificial Intelligence (AI-RTS). This, however, could introduce ethical challenges. While such challenges in AI are in general well studied, there is a gap with respect to ethical AI-RTS. By exploring the literature and learning from our experiences of developing an industry AI-RTS tool, we contribute to the literature by identifying three challenges (assigning responsibility, bias in decision-making and lack of participation) and three approaches (explicability, supervision and diversity). Additionally, we provide a checklist for ethical AI-RTS to help guide the decision-making of the stakeholders involved in the process.
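To make the idea of selecting "the most important test cases" concrete, here is a minimal, hypothetical sketch in Python. It is not the authors' industry tool. It assumes each test already carries a priority score and a human-readable reason (for example produced by an AI model or by heuristics). The selector keeps the highest-scoring tests within a fixed budget and logs why every test was selected or skipped. That log is one simple way a human could review the decision, in the spirit of the explicability and supervision approaches named above. The names `RegressionTest`, `select_tests`, `score` and `reason` are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class RegressionTest:
    name: str
    score: float  # hypothetical priority score, e.g. from an AI model or heuristics
    reason: str   # hypothetical human-readable justification for the score


def select_tests(tests, budget):
    """Select up to `budget` tests with the highest scores.

    Returns (selected, log). The log records, for every test, whether it was
    selected or skipped and why, so a human can review the decision afterwards.
    """
    if budget < 0:
        raise ValueError("budget must be non-negative")
    # Highest score first; Python's sort is stable, so ties keep input order.
    ranked = sorted(tests, key=lambda t: t.score, reverse=True)
    selected = ranked[:budget]
    log = []
    for rank, t in enumerate(ranked, start=1):
        decision = "selected" if rank <= budget else "skipped"
        log.append(f"{decision}: {t.name} (rank {rank}, score {t.score:.2f}; {t.reason})")
    return selected, log


if __name__ == "__main__":
    tests = [
        RegressionTest("test_boot", 0.9, "failed in 3 of the last 10 nightly runs"),
        RegressionTest("test_vlan", 0.4, "no recent failures"),
        RegressionTest("test_firmware_upgrade", 0.7, "touches recently changed code"),
    ]
    selected, log = select_tests(tests, budget=2)
    print([t.name for t in selected])  # ['test_boot', 'test_firmware_upgrade']
    for line in log:
        print(line)
```

Keeping the reason next to every skipped test, not only the selected ones, matters here: a poor selection usually shows up as an important test that was left out, and the log gives someone a starting point for taking responsibility for that outcome.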

Cite

@inproceedings{Strandberg6239,
  author = {Per Erik Strandberg and Mirgita Frasheri and Eduard Paul Enoiu},
  title = {Ethical AI-Powered Regression Test Selection},
  month = {August},
  year = {2021},
  booktitle = {International Conference On Artificial Intelligence Testing},
  url = {http://www.es.mdh.se/publications/6239-}
}
