Experience Report Suite Builder

This page gives a quick summary of two publications on a tool we implemented for system-level regression test selection. The tool is built into a continuous integration environment with nightly testing.

The ISSRE version is available as a PDF at IEEE Xplore, [1], and a preprint is available here: [2].

The IEEE Software publication is available as a PDF at IEEE Xplore, [3], or you can find a preprint at the MDH website, [4]. A preprint is also available here: [5].

Abstract

We propose a new method of determining an effective ordering of regression test cases, and describe its implementation as an automated tool called SuiteBuilder developed by Westermo Research and Development AB.

The tool generates an efficient order to run the cases in an existing test suite by using expected or observed test duration and combining priorities of multiple factors associated with test cases, including previous fault detection success, interval since last executed, and modifications to the code tested.

The method and tool were developed to address problems in the traditional process of regression testing, such as lack of time to run a complete regression suite, failure to detect bugs in time, and tests that are repeatedly omitted. The tool has been integrated into the existing nightly test framework for Westermo software that runs on large-scale data communication systems.

In experimental evaluation of the tool, we found significant improvement in regression testing results. The re-ordered test suites finish within the available time, the majority of fault detecting test cases are located in the first third of the suite, no important test case is omitted, and the necessity for manual work on the suites is greatly reduced.

Informal Summary

In two publications -- "Experience Report: Automated System Level Regression Test Prioritization Using Multiple Factors" at ISSRE 2016 (available at [6]) and "Automated System-Level Regression Test Prioritization in a Nutshell" in IEEE Software, no. 4, 2017 (available at [7]) -- we wrote about a tool that automatically selects test cases for nightly testing.

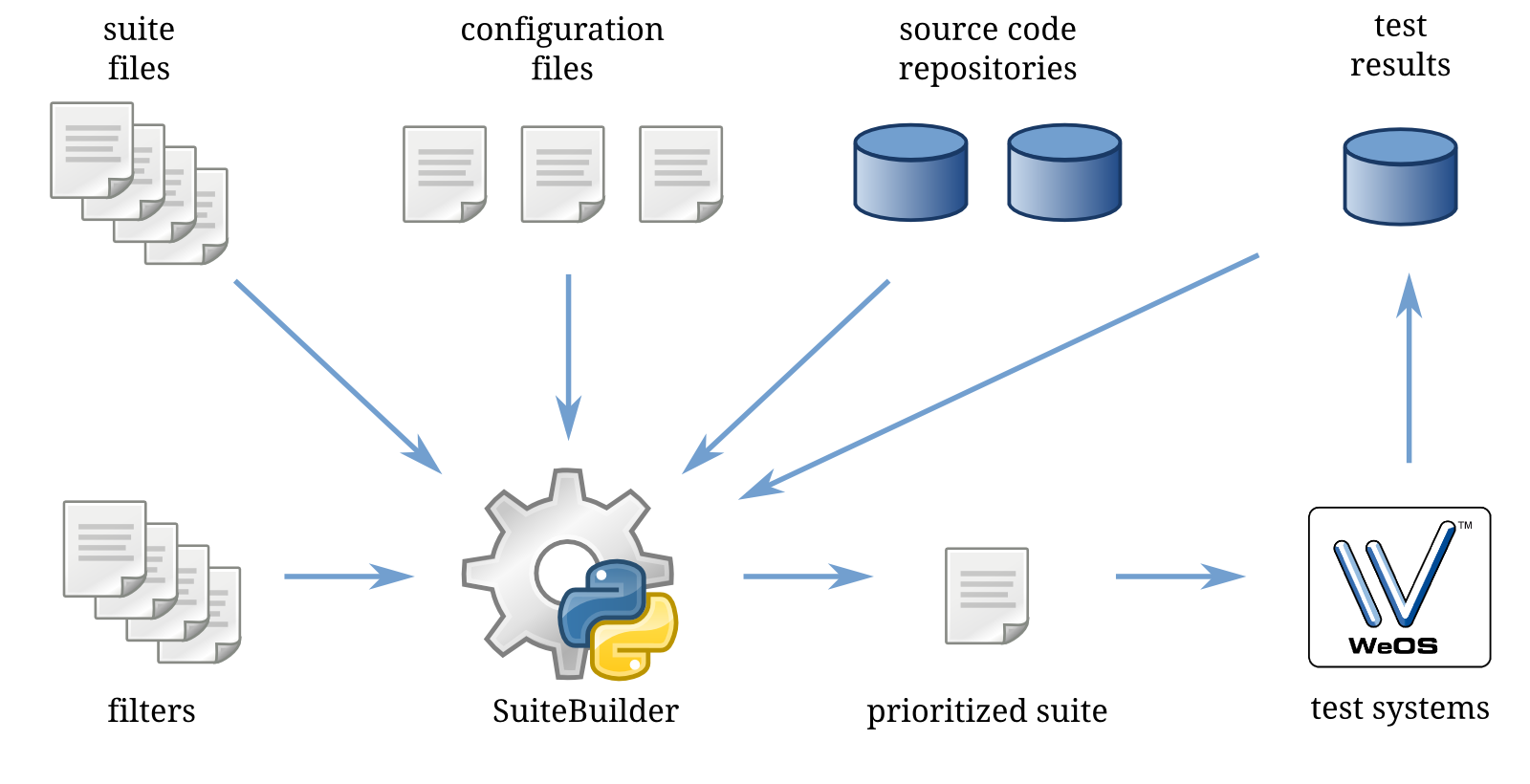

The image below describes the overall flow. SuiteBuilder uses a system of prioritizers that are independent of each other. They come in three types: hard-coded, dynamic in response to source code changes, or dynamic in response to test results. Risky situations, such as when a test case has failed, when related source code has been modified, or when someone has said that the test case is important (or any combination of these), result in the test case being given a high priority and placed early in the test suite.

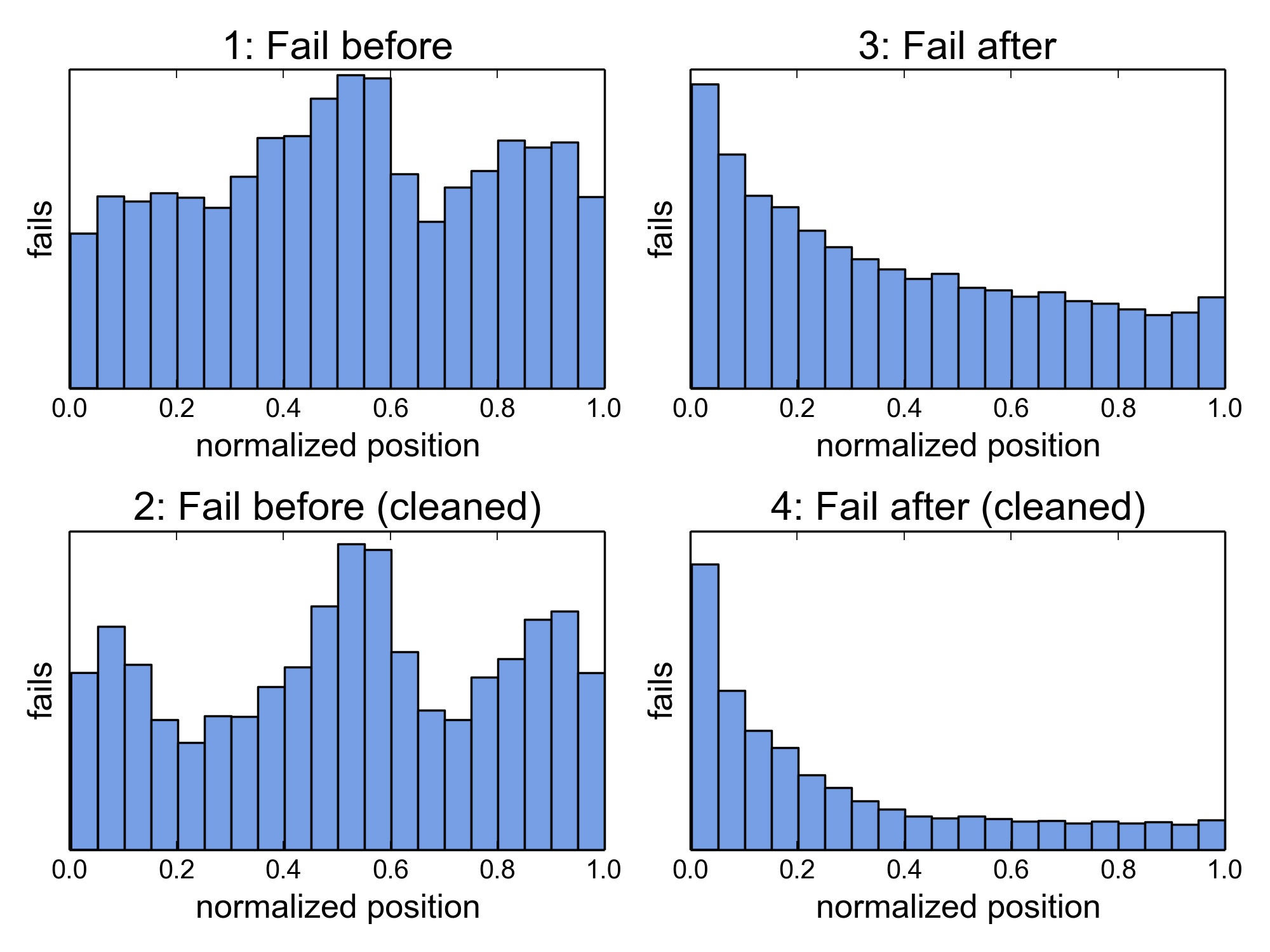

We did a thorough evaluation and found that failures were "all over the suite" before SuiteBuilder was implemented. Afterwards, about two thirds of the failures were located in the first third of the suite. This figure holds once we exclude the suites where we tested very experimental features and many test cases failed. Other problems were also addressed -- see the papers for details.
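As a rough illustration of the "two thirds of the failures in the first third of the suite" measure, the following Python sketch computes the share of failures that fall in the first third of one executed suite. The function name and the input format (a list of booleans in execution order) are assumptions made for this example; the exact computation used in the evaluation is described in the papers.

```python
def share_of_failures_in_first_third(failed_in_order):
    """Return the fraction of failures found in the first third of a suite.

    failed_in_order: list of booleans in execution order, True where the
    test case at that position failed. Returns None if nothing failed.
    """
    total_failures = sum(failed_in_order)
    if total_failures == 0:
        return None
    cutoff = len(failed_in_order) / 3  # positions before this are "early"
    early_failures = sum(
        1 for position, failed in enumerate(failed_in_order)
        if failed and position < cutoff
    )
    return early_failures / total_failures
```

For a nine-test suite where the first, second and sixth tests fail, two of the three failures are in the first three positions, so the function returns two thirds.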

More details are available in the full publications. The paper in IEEE Software is a good starting place if you are not in academia; if you want more depth, the ISSRE paper provides it.

The papers are, unfortunately, not open access. I hardly knew about open access at the time, but I'll try to do better in the future, I promise!

To cite the papers with BibTeX:

@inproceedings{strandberg2016experience,
  title={Experience Report: Automated System Level Regression Test Prioritization Using Multiple Factors},
  author={Strandberg, Per Erik and Sundmark, Daniel and Afzal, Wasif and Ostrand, Thomas J and Weyuker, Elaine J},
  booktitle={Software Reliability Engineering (ISSRE), 2016 IEEE 27th International Symposium on},
  pages={12--23},
  year={2016},
  organization={IEEE}
}

@article{strandberg2017automated,
  title={Automated System-Level Regression Test Prioritization in a Nutshell},
  author={Strandberg, Per Erik and Afzal, Wasif and Ostrand, Thomas J and Weyuker, Elaine J and Sundmark, Daniel},
  journal={IEEE Software},
  volume={34},
  number={4},
  pages={30--37},
  year={2017},
  publisher={IEEE}
}
